Post Archive

PxrLMLayer Blending is Broken

Layers only care about themselves.

NOTE: This problem has been fixed by Pixar and released in RenderMan 20.7. It was an interesting bug, so read on anyway!

While working on Shadows in the Grass I've been exploring building RenderMan shaders out of multiple PxrLMLayers. I've been having issues composing shaders of multiple translucent layers.

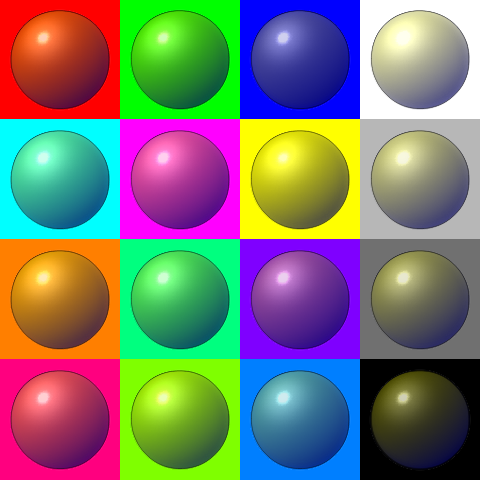

I've reduced it to a very simple case:

Example 1 (top stripe): I have a base diffuse material with an overlay. The base material is black, and the overlay is red with a gradient mask. This looks as I would expect.

Example 2 (middle stripe): I have a base diffuse material, and two overlays on top. The base material is black, the first overlay is green with a mask of 0, and the second overlay is red with a gradient mask.

I would expect the middle stripe to appear like the top stripe, but the green is somehow getting into the calculation despite its mask being zero.
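The expectation can be written down as a minimal sketch. It assumes each overlay is blended over the result so far with a plain linear interpolation driven by its mask. That is an assumption about the intended behaviour, not PxrLMLayer's actual implementation, and the names `lerp` and `composite` are hypothetical:

```python
def lerp(a, b, t):
    """Blend colour a towards colour b by factor t (0 keeps a, 1 gives b)."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def composite(base, overlays):
    """Apply (colour, mask) overlays in order on top of a base colour."""
    result = base
    for colour, mask in overlays:
        result = lerp(result, colour, mask)
    return result


BLACK = (0.0, 0.0, 0.0)
RED = (1.0, 0.0, 0.0)
GREEN = (0.0, 1.0, 0.0)

# Walk along the gradient mask and compare the two stripes.
for mask in (0.0, 0.25, 0.5, 1.0):
    top = composite(BLACK, [(RED, mask)])
    middle = composite(BLACK, [(GREEN, 0.0), (RED, mask)])
    print(mask, top, middle)
```

Under this model a layer with a mask of 0 is a no-op, so both stripes come out identical at every point of the gradient. Any green in the middle stripe means the layer's colour is leaking in independently of its mask.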

When to NOT Linearize Your Textures

Although most images are non-linear, sometimes you should leave them that way.

Knowing how and when to deal with colour spaces in your texture files is crucial to a realistic rendering workflow.

In short: since monitors are usually non-linear devices, image files are generally stored with the inverse non-linearity baked in (the most common example of which is sRGB). Since rendering calculations work in a linear space, you must convert your images back to linear space before using them; otherwise, your renders will not be accurate.

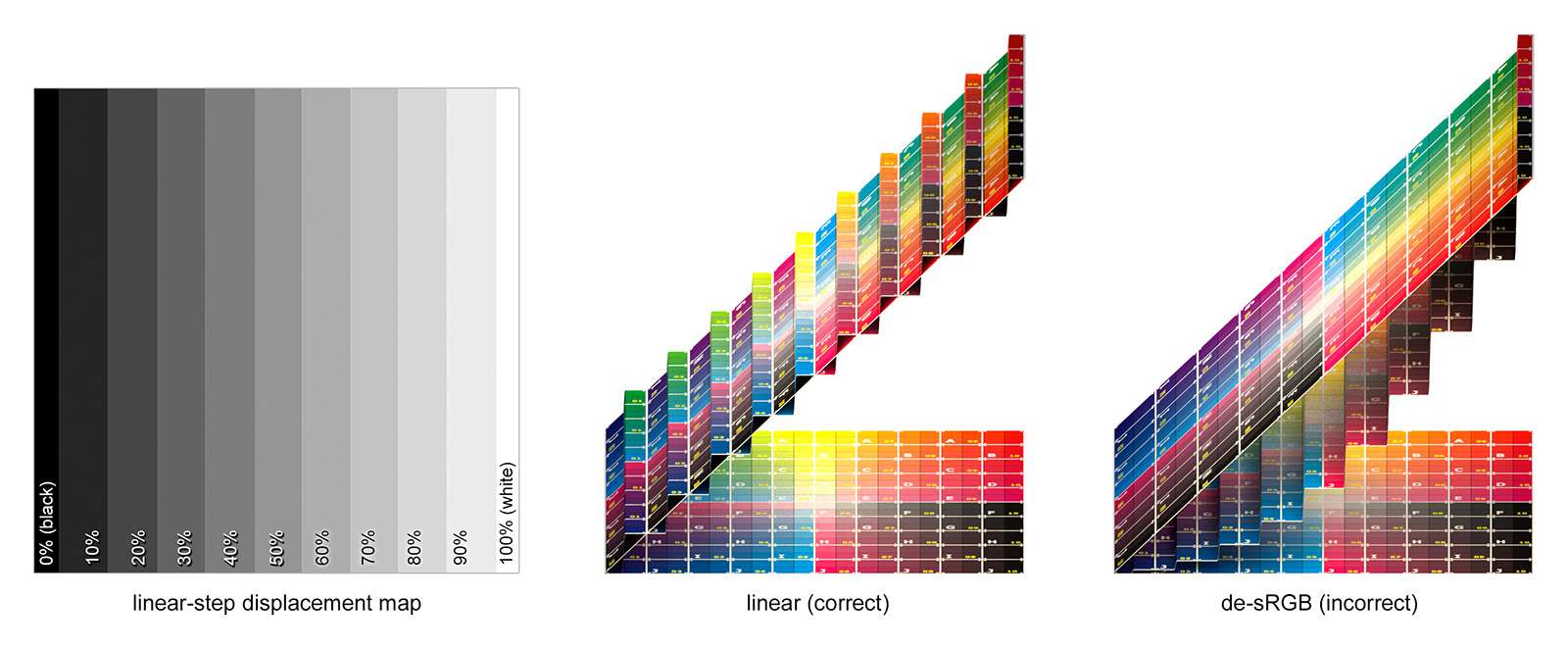

While it is key that you linearize your colour textures (e.g. diffuse, specular colour, etc.), you must be very careful about doing the same with your data textures (e.g. displacement, bump, etc.).

For example, let's look at a displacement:
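A minimal numeric sketch of what goes wrong, using the standard sRGB decoding curve. It assumes the common convention (not stated in the post) that a stored value of 0.5 means "no displacement"; `displacement_offset` is a hypothetical helper:

```python
def srgb_to_linear(v):
    """Decode one sRGB-encoded channel value in [0, 1] to linear light."""
    if v <= 0.04045:
        return v / 12.92
    return ((v + 0.055) / 1.055) ** 2.4


def displacement_offset(stored, scale=1.0):
    """Assumed convention: 0.5 is the rest position, 0 and 1 push in and out."""
    return (stored - 0.5) * scale


stored = 0.5  # mid-grey in the displacement map, i.e. "do not move"

untouched = displacement_offset(stored)
linearized = displacement_offset(srgb_to_linear(stored))

print(untouched)             # the surface stays where it is
print(round(linearized, 3))  # the surface is pushed inwards
```

Decoding maps the stored 0.5 to roughly 0.214, so a texel that should leave the surface alone ends up displacing it by about -0.286 of the full scale. The data was never a colour, so the colour transform only corrupts it.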

Render Heatmaps

Are pictures worth 1000 statistics?

Debugging rendering issues can be particularly problematic. Often, standard debugging procedures (e.g. printing intermediate values, or using a debugger) fall apart under the sheer volume of data they produce when they are called millions of times per frame.

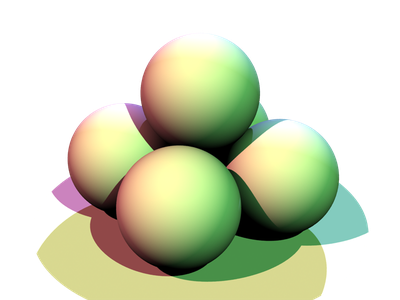

Often, intermediate values can be dumped out via an AOV (i.e. to another image), and inspected as an image. For example, if you were interested in how long various parts of the image are taking to render vs. the others, you could create a heatmap such as:

In this particular example, however, there are a few drawbacks:

- RSL does not have any timing functions;

- every shader would need to be modified in order to collect these stats; and

- you generally only receive information from the front-most surface.

I set out to resolve those issues.
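To illustrate only the heatmap idea itself, here is a rough sketch of turning per-pixel timings into colours. It is not the approach taken in the post: the function names and the blue-to-red ramp are assumptions, and the timings are made-up sample values.

```python
def normalize(timings):
    """Rescale a list of timings to the range [0, 1]."""
    lo, hi = min(timings), max(timings)
    span = hi - lo
    if span == 0:
        # Every pixel took the same time; treat them all as "cold".
        return [0.0 for _ in timings]
    return [(t - lo) / span for t in timings]


def heat_colour(t):
    """Map a normalized value to RGB: blue for cheap, red for expensive."""
    t = min(max(t, 0.0), 1.0)
    return (t, 0.0, 1.0 - t)


# Hypothetical per-pixel render times in milliseconds.
sample_timings = [1.0, 2.0, 3.0, 5.0]
heatmap = [heat_colour(t) for t in normalize(sample_timings)]
print(heatmap)
```

Written out as an extra image, such a map shows at a glance which regions of the frame dominate the render time.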

RenderMan Textures from Python

To better understand the guts of Python and RenderMan, I have in the past implemented a number of proof-of-concept projects extending or embedding each. Previously, I combined my efforts by embedding Python into RenderMan as an RSL shadeop so that shaders could be written in Python!

Unfortunately, that code is lost to the ages, so I decided to revisit my efforts and produce something that could actually have applications: using Python as a source of texture data for RenderMan.
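As a sketch of the kind of data such a source might provide, the following pure-Python function evaluates a procedural checker pattern at texture coordinates. The excerpt does not describe how Python is hooked into RenderMan, so nothing here touches a RenderMan API; `checker` and `render_texture` are hypothetical names.

```python
def checker(s, t, tiles=8):
    """Return white or black for texture coordinates (s, t) in [0, 1)."""
    parity = (int(s * tiles) + int(t * tiles)) % 2
    return (1.0, 1.0, 1.0) if parity == 0 else (0.0, 0.0, 0.0)


def render_texture(width, height, func):
    """Sample func at pixel centres and return rows of RGB tuples."""
    return [
        [func((x + 0.5) / width, (y + 0.5) / height) for x in range(width)]
        for y in range(height)
    ]


# A tiny 4x4 texture with 2x2 tiles.
texture = render_texture(4, 4, lambda s, t: checker(s, t, tiles=2))
for row in texture:
    print(["W" if pixel[0] else "." for pixel in row])
```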

The Gooch Lighting Model

"A Non-Photorealistic Lighting Model For Automatic Technical Illustration"

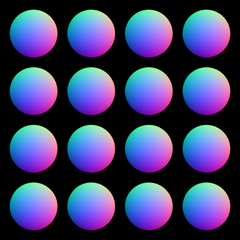

I've recently been toying with the Gooch et al. (1998) non-photorealistic lighting model. Unfortunately, the nature of the project does not permit me to post any of the "real" results quite yet, but some of the tests have a nice look to them all on their own.

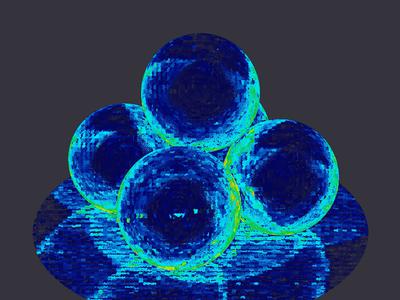

My implementation takes a normal map and colour map, e.g.:

This is the result from those inputs:
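For reference, a minimal sketch of the cool-to-warm tone shading at the core of the model. It uses the common convention that surfaces facing the light receive the warm tone, takes the normal and surface colour that the two maps would supply per pixel, and assumes unit-length vectors. The default values for `b`, `y`, `alpha` and `beta` are illustrative, and this is not the implementation from the post:

```python
def gooch_shade(normal, light_dir, surface_colour,
                b=0.4, y=0.4, alpha=0.2, beta=0.6):
    """Blend between a cool and a warm tone based on the angle to the light.

    normal and light_dir are assumed to be unit-length 3-vectors;
    surface_colour is an RGB triple such as a colour-map sample.
    """
    n_dot_l = sum(n * l for n, l in zip(normal, light_dir))
    t = (1.0 + n_dot_l) / 2.0  # 0 facing away from the light, 1 facing it

    blue = (0.0, 0.0, b)
    yellow = (y, y, 0.0)
    k_cool = [c + alpha * kd for c, kd in zip(blue, surface_colour)]
    k_warm = [c + beta * kd for c, kd in zip(yellow, surface_colour)]

    return tuple(t * w + (1.0 - t) * c for w, c in zip(k_warm, k_cool))


red = (1.0, 0.0, 0.0)
light = (0.0, 0.0, 1.0)
print(gooch_shade((0.0, 0.0, 1.0), light, red))   # facing the light: warm
print(gooch_shade((0.0, 0.0, -1.0), light, red))  # facing away: cool
```

Because the shading never falls to black, surface detail from the normal map stays readable even on the side facing away from the light.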

New Demo Reel

For the first time since 2008, I have a new demo reel. This one finally has a quick breakdown of Blind Spot, and a lot of awesome shots from The Borgias.

There are no more posts tagged "rendering".